Far too many products still fail because there’s simply no demand for them. How does that happen? Or, how do so many startups launch entire businesses without realizing users didn’t need their products?

By overlooking user research.

Every tech company has to build and ship new products to continue growing. And, whether there are 10 users or 10 million users, the key to building successful products is understanding who the users are and what they want. User research is the unsung hero of determining a product’s viability and the secret ingredient to its success.

And while an Amplitude or Mixpanel account is often the first software license a startup purchases, these same startups often neglect to implement user research software or processes. This is despite the fact that product managers at these companies spend roughly 30% of their time on user-research-related activities. If knowing what users do within the product is important, knowing why they do it is even more essential: It guides the follow-on decisions that make or break product experiences.

Before founding Sprig, I was a product manager at five different successful startups, and I learned firsthand how user research can guide the right product decisions. What became clear to me, however, is that although most founders and product teams already know research is important and impactful, not all product teams understand how to prioritize user research in the early stages of company building (especially given the breakneck pace of a startup) or how to evolve a research function as a company grows. This article provides a blueprint for investment in research at every stage, so teams can focus work on the right products and features, and build them with their users in mind.

What is user research?

User research is the process of studying users’ needs, customer journeys, pain points, and processes through questions, surveys, observation, and other methods. Good user research goes beyond simple feedback, adding structure and process to gathering insights from the right users. Anyone can ask questions. The key is knowing how to ask the right questions to help guide the product roadmap and having the right tools to get answers efficiently and effectively.

There are multiple types of research that suit different situations. Understanding when to use each method is an important first step in creating a research program. Here are a few of the different research methods and categories:

Strategic vs. tactical

Tactical research answers the questions that help move the business forward today. For example, “What should we name this new feature?” or “Which product concept is more effective at driving the desired action?” Strategic research looks at long-term initiatives, such as “Should we expand into a new market?” or “Is there enough demand to layer on a new persona?”

Most startups begin with tactical research and move on to strategic research over time. Tools like in-product surveys can enable quick answers to tactical questions about an existing product, such as why users are dropping out of onboarding or not engaging with a new feature.

Moderated vs. unmoderated

Moderated research requires a researcher to guide each participant through the session in real time, while unmoderated research lets users provide feedback on their own. Moderated research yields rich detail and the ability to ask follow-up questions, so it’s useful for larger, strategic decisions where many unknowns remain, such as “Should we build this new product area?”, or for gleaning insights into a new persona.

Alternatively, unmoderated studies are best for tactical questions, when an unbiased perspective is valuable. Use unmoderated research to understand if a product concept makes sense to users or gauge overall satisfaction with a new feature.

While most moderated sessions are fine to conduct via Zoom, unmoderated research tools can help teams get more insights with less effort, and answer critical questions much more quickly.

Quantitative vs. qualitative

Quantitative research answers questions about customers’ attitudes and behaviors — at scale and across measurable metrics — while qualitative research goes in depth with a smaller group of people to understand the why behind those attitudes and behaviors.

However, techniques such as in-product surveys can also enable teams to generate qualitative insights at the scale of quantitative surveys. This is particularly helpful when, for example, teams glean a new insight from a user interview but aren’t sure if a statistically significant portion of users express the same sentiment. Instead of wasting weeks of valuable development time exploring the issue, an in-product survey can quantify the demand and provide direction in a few days.
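To check whether an insight from a handful of interviews holds across the user base, a team can put a confidence interval around the share of survey respondents who report it. The sketch below is a minimal illustration, assuming a yes/no survey answer and using the Wilson score interval, a standard statistical method that the article itself does not prescribe; the function name and the response counts are hypothetical.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Confidence interval for a proportion using the Wilson score method.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if n <= 0:
        raise ValueError("need at least one response")
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2))
    return center - half_width, center + half_width


# Hypothetical: 84 of 400 respondents chose the same answer an interviewee gave.
low, high = wilson_interval(84, 400)
print(f"Between {low:.0%} and {high:.0%} of users likely share this view")
```

If the whole interval sits clearly above or below the level the team cares about, the signal is clear; a wide interval that straddles it means more responses are needed.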

Not every research method is available, or even practical, at every stage, but it’s useful to create a plan early that determines research investment at future growth stages. This helps allocate resources and de-risk decisions from Day 1, and sets the stage for user-driven growth.

How research can fuel the right decisions at every stage of growth

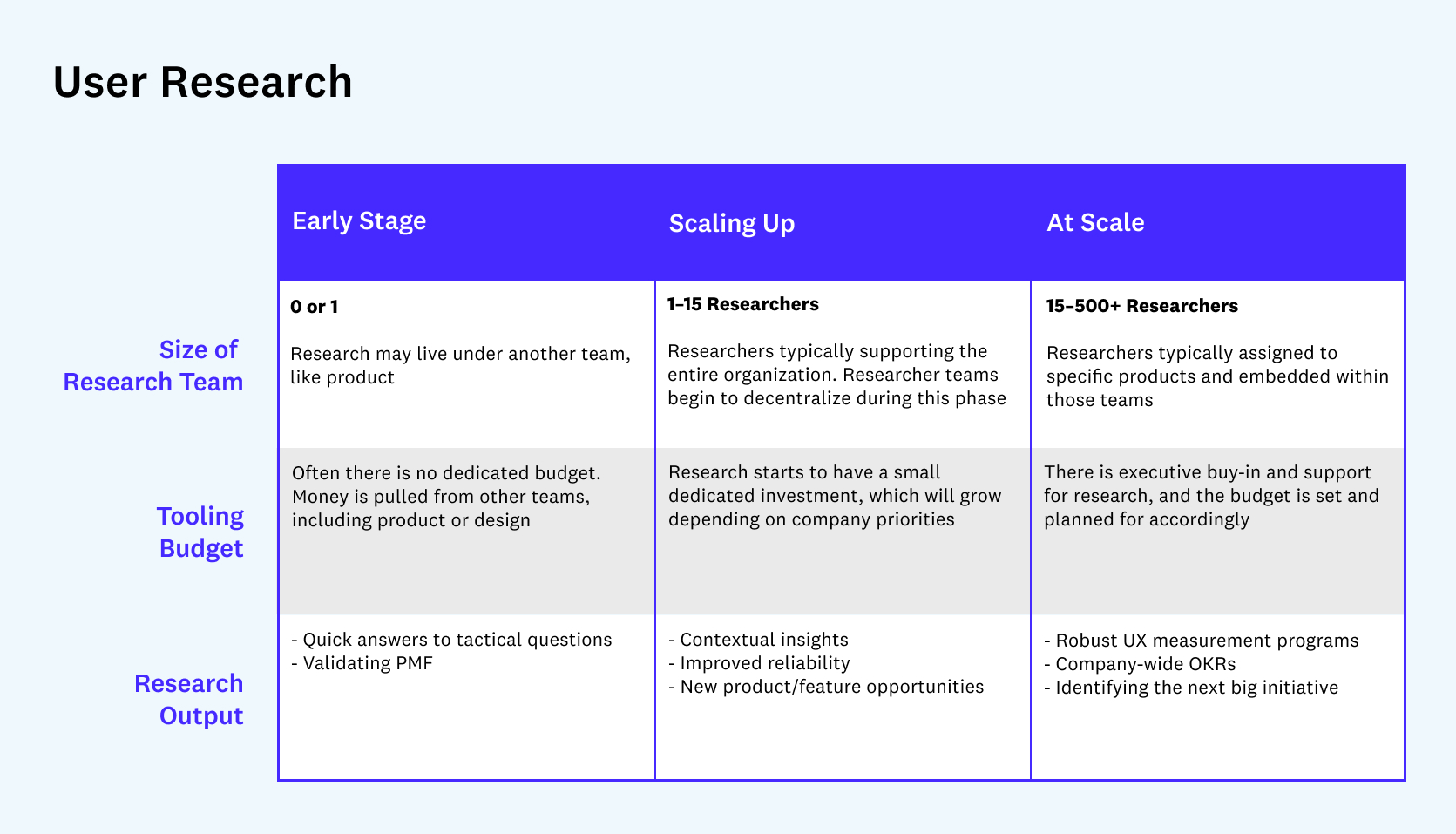

Early-stage teams may be aware of user research but not know where to start. Companies that are already scaling may wonder about best practices for integrating research into a growing organization, or how to retrofit a research function into a product and design team that has already been conducting some form of research on its own.

Here’s how to face those challenges and get started on the path to becoming user-driven.

Early stage

At this stage, companies don’t usually have the bandwidth for a dedicated user research hire, so they need to embrace tools and technology that allow small teams to conduct meaningful research on their own. The goal is to use capital wisely and prioritize only what users actually want, both pre- and post-launch.

Teams might be surprised at how much clarity a short survey can provide into the ever-elusive product-market fit question. A simple four-question survey can help indicate product-market fit, even with just 100 beta users. As a rule of thumb, if 40% or more of users say they would be very disappointed if your product did not exist, there is some level of product-market fit. And if the survey indicates that there is no fit, the open-ended responses to questions like “How could we improve the product?” and “What are the primary benefits you receive from the product?” will help guide your next iterations and ensure the product does not drift away from what users like best.
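The 40% rule of thumb reduces to a simple calculation. A minimal sketch, assuming the survey’s key question offers the answers “very disappointed,” “somewhat disappointed,” and “not disappointed” (the labels, function name, and sample responses are illustrative assumptions):

```python
from collections import Counter

# Assumed answer label for "How would you feel if you could no longer use the product?"
VERY_DISAPPOINTED = "very disappointed"


def pmf_signal(answers: list[str], threshold: float = 0.40) -> tuple[float, bool]:
    """Return the share of 'very disappointed' answers and whether it meets the threshold."""
    if not answers:
        raise ValueError("no responses")
    counts = Counter(a.strip().lower() for a in answers)
    share = counts[VERY_DISAPPOINTED] / len(answers)
    return share, share >= threshold


# Hypothetical responses from 100 beta users.
answers = (["very disappointed"] * 42
           + ["somewhat disappointed"] * 38
           + ["not disappointed"] * 20)
share, has_signal = pmf_signal(answers)
print(f"{share:.0%} very disappointed; product-market fit signal: {has_signal}")
```

With only about 100 responses, a result a few points either side of 40% is directional rather than conclusive, so the open-ended answers matter as much as the headline share.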

Research at this stage doesn’t need to be perfect, but it needs to provide a general signal on which direction to go. And at this size, most companies are still small enough to have individual conversations with users that lead to targeted, productive insights. Tooling at this stage should enable fast, unmoderated testing and provide templates driven by best practices.

Scaling up

As an organization grows, so do its research needs. Founders will feel the pull to create a dedicated user research team to address a growing number of questions regarding product decisions, the customer journey, and more. While a company is growing, the research team may become its own entity and, eventually, add a dozen or so researchers to the organization. Even at this point, a small research team can begin answering more strategic questions while enabling product owners and decision-makers to tackle some tactical research on their own.

This is the stage where a company needs to put into place more rigorous research practices, enabling teams to ask the right questions of the right users at the right time. That’s because during periods of high growth, small changes in flows like acquisition and onboarding can make a huge impact. And making the wrong decision can cause massive declines in new user growth and contribute to millions in lost revenue.

By simply learning from users in-product, teams can obtain a clear and reliable signal about what’s working, what’s not, and why. A well-timed survey after users drop out of the onboarding flow or fail to convert from a trial to a paid subscription can provide clear guidance on how to optimize those flows within hours, without waiting for the results of multiple A/B tests. In my experience, these are some of the most common and widely used surveys because they generate real results, fast.
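The timing logic behind such a survey can be sketched as a simple eligibility check. This is a hypothetical illustration, not any survey tool’s API: the session fields, the two-minute idle threshold, and the 25% sampling rate are all assumptions chosen to show the idea of targeting stalled users while limiting survey fatigue.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnboardingSession:
    # Hypothetical session record; field names are illustrative only.
    user_id: str
    steps_completed: int
    total_steps: int
    idle_seconds: float
    already_surveyed: bool


def should_show_dropoff_survey(session: OnboardingSession,
                               idle_threshold: float = 120.0,
                               sample_rate: float = 0.25,
                               rng: Optional[random.Random] = None) -> bool:
    """Return True if a user who seems to have stalled in onboarding should be surveyed."""
    if rng is None:
        rng = random.Random()
    stalled = (session.steps_completed < session.total_steps
               and session.idle_seconds >= idle_threshold)
    if not stalled or session.already_surveyed:
        return False
    # Survey only a random sample of eligible users to limit survey fatigue.
    return rng.random() < sample_rate


stalled = OnboardingSession("u_123", steps_completed=2, total_steps=5,
                            idle_seconds=300.0, already_surveyed=False)
print(should_show_dropoff_survey(stalled, rng=random.Random(42)))
```

Passing a seeded `random.Random` makes the sampling reproducible in tests; in production a team would typically also cap how often any one user is surveyed.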

At scale

Once a company reaches significant scale, usually post-IPO, user research can become a key competitive advantage. Whereas in earlier stages of company growth, speed to market and product-market fit will likely be the biggest drivers of success, companies operating at scale must optimize around the edges, and small changes matter much more.

At this point in a company’s journey, research is conducted systematically across the entire product lifecycle, and large teams of researchers work in lockstep with product teams to drive the right decision-making. At scale, it’s essential to continuously measure the user experience by capturing a variety of metrics and insights. These can range from simple surveys like Customer Satisfaction Score (CSAT) to custom metrics that track the product’s impact on business-specific KPIs or OKRs.

In companies like Meta and Google, this type of research enables teams to juxtapose user experience data with product analytics and financial data to ensure the company makes decisions in the best interests of both the company and its customers. While this is far from the norm at every at-scale company, it’s the ideal state for organizations aspiring to be customer-centric.

The larger a company gets, the more it must invest in tools that help it scale and disseminate research across the organization, in order to keep all teams aware of and aligned around the customer experience. The best organizations use tools that enable continuous user-experience measurement and benchmarking as they seek to understand the impact of new product development and prioritize across projects.

How to incorporate user research across the product development lifecycle

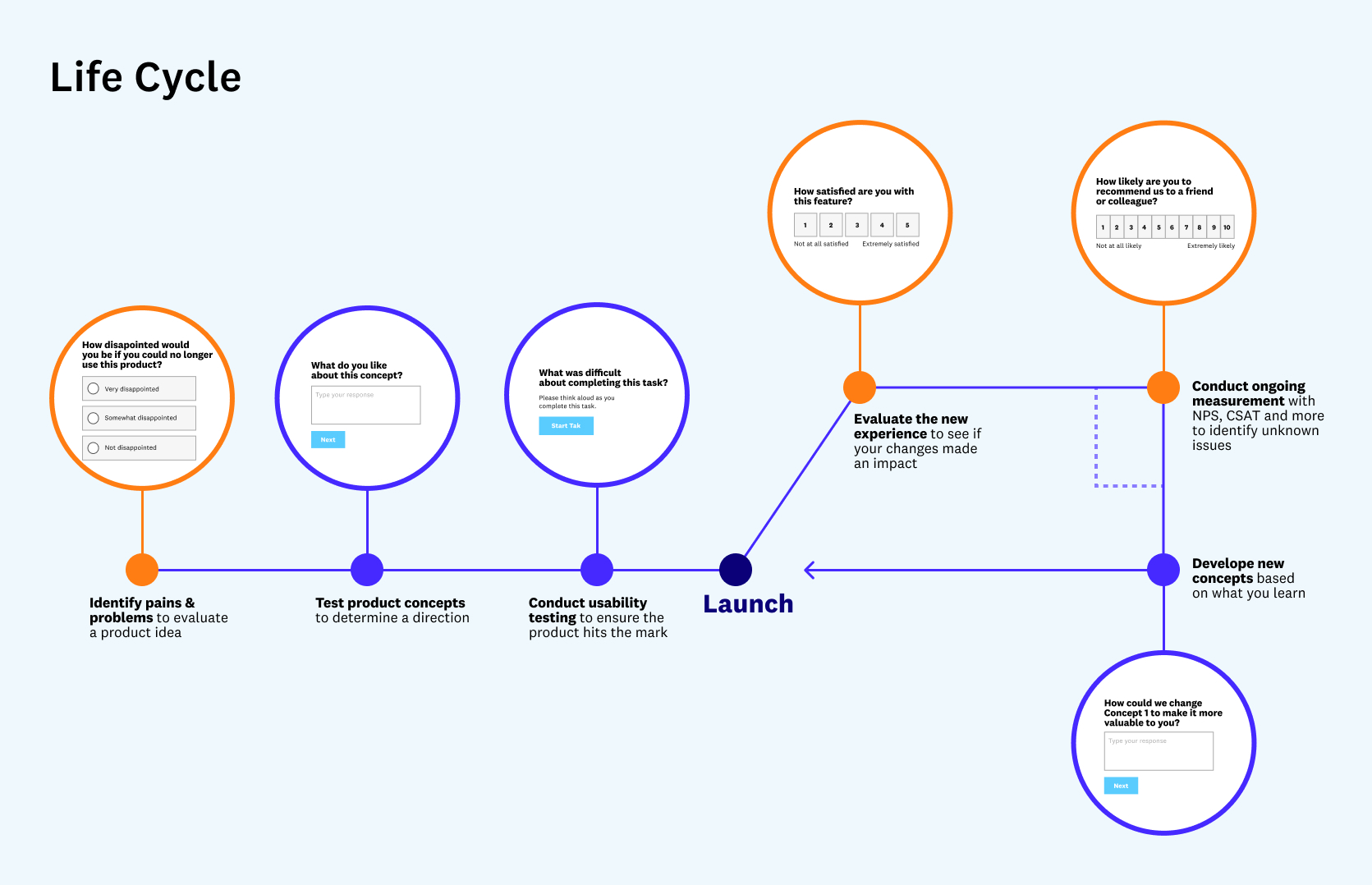

Of course, even the best-laid plans and well-developed strategies can fall victim to bad execution. So, how does user research actually play out in practice? Regardless of stage and size of the research team, the framework to incorporate user research across the product development lifecycle remains generally the same.

It starts with customer discovery. After identifying issues and settling on a direction, it’s on to concept testing and usability testing. Finally, after launching new features and functionality, it’s important to evaluate the effectiveness of those changes post-launch. The cycle continues and seeks to optimize growth initiatives, launch new features, improve product adoption, and more.

Here’s a breakdown of the type of research companies might conduct at each stage of the product development lifecycle.

Phase 1: Discovery research

Discovery research, also called exploratory research, identifies pain points before they become a problem in the live product. Or, if the product or feature has already launched, it can reveal issues that are preventing users from taking a desired action. Discovery research can help shorten the product lifecycle by avoiding unnecessary work later on and getting to the most effective solution in earlier iterations.

For example, I worked with a popular real-estate technology company that noticed onboarding drop-off was much higher than expected on its “Get a Quote” page. The team had a few options to understand why this was the case:

- Conduct a variety of A/B and multivariate tests to adjust content on the page and remove fields.

- Take an educated guess based on past learnings and assumptions.

- Conduct user research.

The first two options would take anywhere from a few weeks to several months and would likely result in significant waste, because only about 1 in 7 A/B tests produces a clear winner. By conducting user research with a few simple in-app surveys, the product team could go right to the source and learn in real time from users as they completed the “Get a Quote” page. In this case, the team learned that users were hesitant to provide their phone number so early in the quote process, and that many visitors to that page were not actually planning to get a quote at all. They were just shopping around and in a completely different part of their customer journey.

When the phone number field was removed from the page, the conversion rate increased 10% almost immediately.

Phase 2: Concept testing

Improving conversion and fixing critical growth funnels is only one use of discovery research. More often, there will be several different concepts that address product issues, especially those related to engagement and adoption. The goal is to narrow them down to one, quickly, and get it right before investing significant time and resources into building.

Let’s say, for example, that while researching the “Get a Quote” experience in the example above, the team finds out that obtaining a mortgage is confusing for users and prevents them from moving forward in the home-buying process. The team comes up with some ideas to address the issue, and lands on an interactive mortgage calculator as the solution. That might be the best option, but it will require significant engineering and marketing resources to build and launch the feature.

This is why it’s important to de-risk the project by creating several product mockups and testing them with users before starting to build. Unmoderated concept testing makes it easier to test a few options and gain insight into the viability of potential solutions. When testing multiple prototypes, limiting the options to two or three will lessen the cognitive load for test-takers.

Phase 3: Usability testing

With the most compelling mortgage calculator concept selected, it’s time to make sure the design actually works. With usability testing, participants complete a set of tasks, using either a prototype (sometimes called “prototype testing”) or a live website/app, to identify points of friction and surface opportunities to improve the user experience. Participants are asked to “think aloud” as they complete tasks, explaining what questions, hesitations, or challenges they are having. For the mortgage calculator, test prompts might include “Can you easily adjust your down payment?” or “Set your rate to a 30-year fixed.”

Best practices for conducting usability testing suggest including at least 5, and up to 50, participants. There’s no need to overcomplicate usability testing (it’s the simplest of the research techniques described in this article); the point is simply to make sure your design is functional and users can complete the intended actions.
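One common way to reason about the five-participant minimum is the problem-discovery model published by Nielsen and Landauer, in which each participant independently uncovers a given usability problem with some probability p, so n participants find an expected share of 1 − (1 − p)^n. The sketch below assumes that model and their often-cited average of p = 0.31; real products and tasks can differ considerably.

```python
def share_of_problems_found(n_participants: int, p_detect: float = 0.31) -> float:
    """Expected share of usability problems found by n participants, assuming each
    participant independently hits any given problem with probability p_detect."""
    if not 0.0 <= p_detect <= 1.0:
        raise ValueError("p_detect must be a probability")
    return 1 - (1 - p_detect) ** n_participants


for n in (1, 3, 5, 10, 15):
    print(n, f"{share_of_problems_found(n):.0%}")
```

Under these assumptions, five participants surface roughly 84% of problems. The model treats all problems as equally easy to find and all participants as alike, which is one reason to recruit more people when a design serves several distinct user segments.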

Phase 4: Post-launch evaluation

User research is continuous — the work doesn’t end with a product launch. After launching new features and flows comes the job of measuring satisfaction and comparing the results with previous data to ensure the product changes worked as intended.

Coming back to the mortgage calculator example, we’d want to compare the metrics from the previous onboarding experience with the latest iteration. A team can run the same in-product survey before and after the new calculator is implemented to see if the enhancements are providing an improved experience and driving the right behaviors. The team might ask, “How confident are you in the results you received?” to help gauge whether the calculator is delivering on its promise of improving buyer confidence. If not, open-ended responses should reveal why and point to next steps.
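To judge whether a before-and-after change in survey results is more than noise, a team can compare the two waves with a two-proportion z-test. A minimal sketch, assuming answers are bucketed into “confident” versus everything else and that the two waves come from different respondents; the function name and counts are hypothetical:

```python
import math
from statistics import NormalDist


def two_proportion_z_test(x_before: int, n_before: int,
                          x_after: int, n_after: int) -> tuple[float, float]:
    """Two-sided z-test for a change in a yes/no survey share between two waves.

    Returns (z statistic, p-value), using the pooled-proportion standard error.
    """
    p_before = x_before / n_before
    p_after = x_after / n_after
    pooled = (x_before + x_after) / (n_before + n_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    if se == 0:
        raise ValueError("responses show no variation; the test is undefined")
    z = (p_after - p_before) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical wave sizes and counts of "confident" answers before and after launch.
z, p = two_proportion_z_test(x_before=90, n_before=300, x_after=126, n_after=300)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the shift is unlikely to be chance alone; it does not by itself prove the calculator caused it, so reading it alongside product analytics remains important.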

It’s not uncommon for this process to be iterative, with multiple rounds of researching and solutioning.

Bonus: Continuous UX measurement

Not all research is related to a specific, identifiable business problem, such as poor onboarding conversion or a dip in engagement. As companies set up and scale up research, it’s beneficial to continuously monitor the user experience to identify unknown problems that aren’t already on the product team’s radar. This type of research doesn’t need to take a long time or be complex. Adding simple in-product surveys on common pages that measure net promoter score (NPS) and customer satisfaction score (CSAT) can lead to some of the most important “aha moments” within a business.
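Both metrics are simple to compute from raw responses. The sketch below uses the standard NPS definition (the percentage of promoters scoring 9 or 10 minus the percentage of detractors scoring 0 to 6) and one common CSAT convention, the percentage of 1-to-5 ratings that are 4 or 5; some teams report CSAT as an average rating instead. The sample scores are made up.

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score on a 0-10 scale: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("no responses")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("NPS scores must be between 0 and 10")
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)


def csat(ratings: list[int]) -> float:
    """CSAT as the percentage of 1-5 ratings that are 4 or 5 (one common convention)."""
    if not ratings:
        raise ValueError("no responses")
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("CSAT ratings must be between 1 and 5")
    return 100 * sum(r >= 4 for r in ratings) / len(ratings)


print(nps([10, 9, 9, 8, 7, 6, 3, 10]))
print(csat([5, 4, 3, 5, 2]))
```

Tracking these numbers per page over time, rather than as one-off readings, is what makes unexpected drops stand out.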

Back to phase 1: Redesign discovery research

And the cycle continues. With the continuous measurement and post-launch evaluation insights, an organization will continue to uncover new pain points. In business, and especially in tech, there are always new problems to solve — and they need to be solved quickly. This is especially true in the era of agile development, when — unlike the quarterly release schedules of a decade ago — teams are working on continuous release cycles and, in some cases, shipping products every few days. Companies that understand user research are in a much better position to keep up with all this change and keep their customers happy.

Views expressed in “posts” (including articles, podcasts, videos, and social media) are those of the individuals quoted therein and are not necessarily the views of AH Capital Management, L.L.C. (“a16z”) or its respective affiliates. Certain information contained in here has been obtained from third-party sources, including from portfolio companies of funds managed by a16z. While taken from sources believed to be reliable, a16z has not independently verified such information and makes no representations about the enduring accuracy of the information or its appropriateness for a given situation.

This content is provided for informational purposes only, and should not be relied upon as legal, business, investment, or tax advice. You should consult your own advisers as to those matters. References to any securities or digital assets are for illustrative purposes only, and do not constitute an investment recommendation or offer to provide investment advisory services. Furthermore, this content is not directed at nor intended for use by any investors or prospective investors, and may not under any circumstances be relied upon when making a decision to invest in any fund managed by a16z. (An offering to invest in an a16z fund will be made only by the private placement memorandum, subscription agreement, and other relevant documentation of any such fund and should be read in their entirety.) Any investments or portfolio companies mentioned, referred to, or described are not representative of all investments in vehicles managed by a16z, and there can be no assurance that the investments will be profitable or that other investments made in the future will have similar characteristics or results. A list of investments made by funds managed by Andreessen Horowitz (excluding investments for which the issuer has not provided permission for a16z to disclose publicly as well as unannounced investments in publicly traded digital assets) is available at https://a16z.com/investments/.

Charts and graphs provided within are for informational purposes solely and should not be relied upon when making any investment decision. Past performance is not indicative of future results. The content speaks only as of the date indicated. Any projections, estimates, forecasts, targets, prospects, and/or opinions expressed in these materials are subject to change without notice and may differ or be contrary to opinions expressed by others. Please see https://a16z.com/disclosures for additional important information.